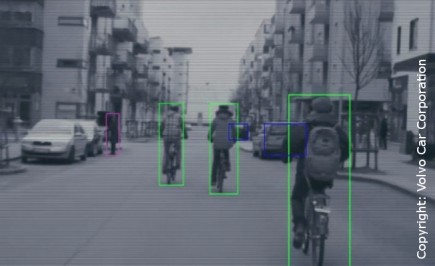

Modern cars are equipped with video cameras and automatic safety features, both fully autonomous systems and systems that involve the driver. One critical element is to display the scene around the car as clearly as possible to the driver, with the help of cameras and advanced video processing, so that appropriate action can be taken. In a project with Volvo Car Corporation, Chalmers, and Epsilon, a video enhancement algorithm based on fusion of video signals from two different sources has been developed from initial concept to a fully functional hardware demonstrator that includes Volvo Car Corporation's next-generation driver display.

Enhanced video display can be combined with automatic systems for detecting dangers and with automatic countermeasures such as auto-braking. However, in many situations the best approach is simply to warn the driver of a danger and let the driver take appropriate action. For this to work, the driver must be given a clear, and sometimes artificially enhanced, view of the situation.

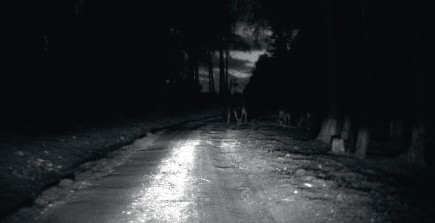

In many situations where dangerous events may occur, such as night driving or when strong light sources are present, the direct camera output is of limited use, and advanced real-time post-processing is needed before the video is presented to the driver. Giving the driver better information in this way improves the quality of the driver's response. Infrared cameras can be used to detect people or animals at night, but further video enhancement is needed to give a clear view of the entire situation.

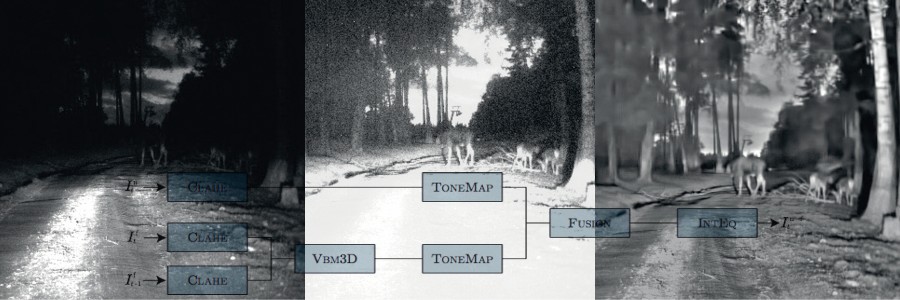

In a VINNOVA FFI project carried out in cooperation with Volvo Car Corporation, Epsilon Embedded, and Chalmers, FCC has developed an automatic algorithm for real-time video enhancement based on fusion of regular and infrared video signals. The improvement is dramatic: the driver gets an enhanced view of both the road and its surroundings, even under very poor conditions in complex environments. The algorithm adapts to changing conditions and automatically improves image quality both in dark areas and in areas flooded with bright light. Among other things, the video enhancement algorithm combines local adaptive contrast enhancement, noise reduction, light normalization, and fusion of video streams captured with and without an infrared flash. Several state-of-the-art algorithms were evaluated initially, but the resulting videos were of insufficient quality. This called for a novel approach that combines available methods with custom-developed algorithms. Key components include Contrast Limited Adaptive Histogram Equalization (CLAHE), Video Block Matching 3D (VBM3D), and tone mapping. Histogram equalization is a well-established contrast enhancement technique; CLAHE is a variant that works locally, on small regions of the image, and amplifies noise less because it clips the histogram before equalizing.
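To make the "contrast limited" part concrete, here is a minimal pure-Python sketch of the per-tile step of CLAHE: the grey-level histogram is clipped at a limit, the clipped excess is spread back over all bins, and the cumulative histogram becomes the grey-level mapping. This is the textbook technique, not the project's implementation; the function name, the absolute `clip_limit`, and the simple remainder handling are assumptions. A full CLAHE computes one such mapping per image tile and blends the mappings of neighboring tiles with bilinear interpolation, which is omitted here.

```python
def clipped_equalization_lut(pixels, clip_limit, levels=256):
    """Return a grey-level lookup table for one tile, CLAHE style.

    pixels: flat list of integer grey values in [0, levels).
    clip_limit: hypothetical absolute cap on the count in any histogram bin.
    """
    # 1. Histogram of the tile.
    hist = [0] * levels
    for p in pixels:
        hist[p] += 1

    # 2. Clip every bin at clip_limit and collect the excess counts.
    excess = 0
    for i, count in enumerate(hist):
        if count > clip_limit:
            excess += count - clip_limit
            hist[i] = clip_limit

    # 3. Spread the excess evenly over all bins; the remainder goes to the
    #    lowest bins (a simplification; libraries distribute it differently).
    per_bin, remainder = divmod(excess, levels)
    for i in range(levels):
        hist[i] += per_bin + (1 if i < remainder else 0)

    # 4. The cumulative histogram, scaled to [0, levels - 1], is the mapping.
    total = len(pixels)
    lut, running = [], 0
    for count in hist:
        running += count
        lut.append(round((levels - 1) * running / total))
    return lut


# Usage: a dark tile containing only two close grey levels, 10 and 12.
tile = [10] * 50 + [12] * 50
plain = clipped_equalization_lut(tile, clip_limit=100)   # no effective clipping
limited = clipped_equalization_lut(tile, clip_limit=10)  # strong clipping
print(plain[12] - plain[9], limited[12] - limited[9])    # 255 58
```

Without clipping, the two nearby dark levels are stretched across the whole output range; with a small limit they are separated only moderately, which is what keeps noise in flat dark regions from being blown up.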

When dark video is amplified and its contrast enhanced, noise is amplified too, so noise reduction is necessary. Here, noise reduction is mainly performed by a sub-algorithm known as VBM3D (Video Block Matching 3D). It identifies similar small parts of the image in the current and neighboring frames, stacks those parts into a 3D structure, performs transform-based noise reduction on that structure, and then writes the processed parts back into the image.
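The following pure-Python sketch illustrates the collaborative-filtering idea behind VBM3D for a single reference block: similar blocks are searched for in the current and previous frames, stacked into a group, transformed along the stacking dimension with an orthonormal Haar transform, hard-thresholded, and transformed back. It is heavily simplified and hypothetical (function names, block size, search window, and threshold are assumptions): real VBM3D also applies a 2D transform within each block, aggregates overlapping block estimates with weights, and runs a second Wiener-filtering pass.

```python
import math


def haar(values):
    """Orthonormal multi-level 1D Haar transform; len(values) must be a power of two."""
    v = [float(x) for x in values]
    n = len(v)
    while n > 1:
        half = n // 2
        avg = [(v[2 * i] + v[2 * i + 1]) / math.sqrt(2) for i in range(half)]
        diff = [(v[2 * i] - v[2 * i + 1]) / math.sqrt(2) for i in range(half)]
        v[:n] = avg + diff
        n = half
    return v


def inverse_haar(coeffs):
    """Inverse of haar()."""
    c = list(coeffs)
    n = 1
    while n < len(c):
        merged = []
        for a, d in zip(c[:n], c[n:2 * n]):
            merged += [(a + d) / math.sqrt(2), (a - d) / math.sqrt(2)]
        c[:2 * n] = merged
        n *= 2
    return c


def block(frame, y, x, size):
    return [row[x:x + size] for row in frame[y:y + size]]


def distance(a, b):
    """Sum of squared differences between two equally sized blocks."""
    return sum((p - q) ** 2 for ra, rb in zip(a, b) for p, q in zip(ra, rb))


def denoise_block(frames, ref, y, x, size=4, group_size=4, search=2, threshold=30.0):
    """Denoise the size x size block at (y, x) of frames[ref] (hypothetical API).

    frames: list of frames (lists of rows), e.g. [previous, current].
    group_size must be a power of two.
    """
    reference = block(frames[ref], y, x, size)

    # Block matching: collect candidate blocks near (y, x) in every frame.
    candidates = []
    for frame in frames:
        h, w = len(frame), len(frame[0])
        for yy in range(max(0, y - search), min(h - size, y + search) + 1):
            for xx in range(max(0, x - search), min(w - size, x + search) + 1):
                cand = block(frame, yy, xx, size)
                candidates.append((distance(reference, cand), cand))

    # Grouping: keep the most similar blocks (the reference has distance 0).
    candidates.sort(key=lambda item: item[0])
    group = [cand for _, cand in candidates[:group_size]]

    # Collaborative filtering along the group axis, one pixel position at a time.
    out = [[0.0] * size for _ in range(size)]
    for i in range(size):
        for j in range(size):
            coeffs = haar([b[i][j] for b in group])
            # Hard thresholding: keep the average (index 0) and strong details only.
            coeffs = [c if k == 0 or abs(c) > threshold else 0.0 for k, c in enumerate(coeffs)]
            # group[0] has distance 0: the reference block or an identical copy.
            out[i][j] = inverse_haar(coeffs)[0]
    return out


# Usage: a flat previous frame and a current frame with one noise spike.
previous = [[100.0] * 8 for _ in range(8)]
current = [row[:] for row in previous]
current[3][3] = 120.0
result = denoise_block([previous, current], ref=1, y=2, x=2)
print(round(result[1][1], 6))  # 105.0
```

The spike has no counterpart in the matched blocks, so it lands in the small detail coefficients, which are zeroed; the filtered pixel is pulled toward the group mean, while values shared by the whole group pass through unchanged.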

Using an infrared flash gives better image information in dark areas, but makes well-lit areas worse. The method therefore uses two video streams, one with flash and one without, and combines them using a continuously updated weight map of which parts of the image are bright and which are dark. Finally, object contrast is enhanced to make dangers visible, and artifacts caused by the lighting conditions, such as the bright oval cast by the headlights, are removed. To do this, light patterns that remain stable as the car moves are calculated, and the image is normalized based on these patterns. This removes the headlight oval but preserves real objects.
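The fusion and light-normalization steps can be sketched as follows. Everything here is an illustrative assumption, not the project's method: the weight map is a clamped linear ramp on no-flash brightness, smoothed over time with an exponential moving average; the "stable light pattern" is approximated by a slow running average of frames (moving objects blur out, while the headlight oval stays in place); and normalization divides each frame by that pattern and rescales by the pattern's mean. Frames are plain lists of rows of grey values, and all names and parameter values are hypothetical.

```python
def update_weights(weights, no_flash, alpha=0.2, dark=40.0, bright=120.0):
    """Update the per-pixel weight of the no-flash stream (1 = bright, 0 = dark).

    The target weight is a clamped linear ramp between the assumed grey levels
    `dark` and `bright`; an exponential moving average with factor `alpha`
    keeps the map stable from frame to frame.
    """
    return [
        [(1 - alpha) * w + alpha * min(1.0, max(0.0, (v - dark) / (bright - dark)))
         for w, v in zip(w_row, v_row)]
        for w_row, v_row in zip(weights, no_flash)
    ]


def fuse(flash, no_flash, weights):
    """Use the no-flash stream where it is bright and the flash stream where it is dark."""
    return [
        [w * nf + (1 - w) * f for f, nf, w in zip(f_row, nf_row, w_row)]
        for f_row, nf_row, w_row in zip(flash, no_flash, weights)
    ]


def update_light_pattern(pattern, frame, alpha=0.05):
    """Slow running average: moving objects blur out, static light patterns remain."""
    return [
        [(1 - alpha) * p + alpha * v for p, v in zip(p_row, v_row)]
        for p_row, v_row in zip(pattern, frame)
    ]


def normalize_light(frame, pattern, eps=1e-6):
    """Divide out the stable light pattern and rescale to its mean brightness."""
    mean = sum(map(sum, pattern)) / (len(pattern) * len(pattern[0]))
    return [
        [v * mean / (p + eps) for v, p in zip(v_row, p_row)]
        for v_row, p_row in zip(frame, pattern)
    ]


# Usage: a 1x2 image whose left pixel is well lit and whose right pixel is dark.
no_flash = [[150.0, 10.0]]
flash = [[255.0, 90.0]]  # the flash saturates the well-lit pixel
weights = [[0.5, 0.5]]
for _ in range(50):
    weights = update_weights(weights, no_flash)
fused = fuse(flash, no_flash, weights)
print([round(v) for v in fused[0]])  # [150, 90]

# A headlight pattern that brightens the left pixel in every frame is flattened,
headlight = [[200.0, 50.0]]
pattern = [[0.0, 0.0]]
for _ in range(200):
    pattern = update_light_pattern(pattern, headlight)
print([round(v) for v in normalize_light(headlight, pattern)[0]])  # [125, 125]
# while a real object (right pixel twice as bright as the pattern) survives.
print([round(v) for v in normalize_light([[200.0, 100.0]], pattern)[0]])  # [125, 250]
```

Smoothing the weight map over time avoids flicker when a pixel hovers near the bright/dark boundary; the price is a short lag when lighting changes abruptly.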

First, all images undergo contrast enhancement (CLAHE). Next, noise reduction (VBM3D) is performed, focusing on the flash video stream, since that stream is used for the darker areas, where noise is strongest. Note that the noise reduction uses not only the current image but also the previous one. Then a tone mapping step performs an overall amplification of darker areas. After that, the two video streams are fused into one, and finally, stable brightness patterns such as the headlight oval are removed in an intensity equalization step.

The results have been presented internally at Volvo Car Corporation and in external forums, such as Innovation Bazaar (a series of local networking events at Chalmers Lindholmen arranged by Vehicle ICT Arena) and Transportforum, the main Nordic conference for the transportation sector, and have been very well received by the automotive industry.
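The processing order described above (contrast enhancement, noise reduction on the flash stream, tone mapping, fusion, intensity equalization) can be written as a small driver function. This is a hypothetical sketch: only the tone-mapping stage is concrete, a gamma curve with an assumed exponent of 0.5 that lifts dark values more than bright ones; the other stages are passed in as functions so that any implementation can be plugged in, and `prev_flash` is assumed to be the previous flash frame after contrast enhancement.

```python
from typing import Callable, List

Frame = List[List[float]]


def tone_map(frame: Frame, gamma: float = 0.5, max_value: float = 255.0) -> Frame:
    """Global gamma curve; gamma < 1 amplifies dark values more than bright ones."""
    return [[max_value * (v / max_value) ** gamma for v in row] for row in frame]


def process_frame_pair(
    flash: Frame,
    no_flash: Frame,
    prev_flash: Frame,
    contrast: Callable[[Frame], Frame],
    denoise: Callable[[Frame, Frame], Frame],
    fuse: Callable[[Frame, Frame], Frame],
    equalize: Callable[[Frame], Frame],
) -> Frame:
    """Run one flash/no-flash frame pair through the stages in the described order."""
    flash, no_flash = contrast(flash), contrast(no_flash)  # 1. contrast enhancement
    flash = denoise(flash, prev_flash)                     # 2. noise reduction, flash stream only
    flash, no_flash = tone_map(flash), tone_map(no_flash)  # 3. tone mapping
    fused = fuse(flash, no_flash)                          # 4. fusion of the two streams
    return equalize(fused)                                 # 5. intensity equalization


# Usage with trivial stand-in stages, just to show the data flow.
def identity(frame: Frame) -> Frame:
    return frame


def average(a: Frame, b: Frame) -> Frame:
    return [[(x + y) / 2 for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


out = process_frame_pair([[100.0]], [[200.0]], [[100.0]],
                         contrast=identity, denoise=average,
                         fuse=average, equalize=identity)
print(round(out[0][0], 2))  # 192.76
```

Keeping the stages as interchangeable functions makes it easy to swap, for example, a global gamma curve for a local tone-mapping operator without touching the rest of the pipeline.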

Partners: VINNOVA (FFI 2009-00071), Volvo Car Corporation, FCC, Epsilon, and Chalmers.